When working on SEO, we often come across a file called robots.txt. So what is this file used for, and why is it so widely recommended? Today, we are going to discuss the robots.txt file and explain why it is so important for SEO.

What is robots.txt?

The robots.txt file tells search engines which pages of your website they may access and index, and which ones they should not access. Let’s understand this with an example.

Example: If your robots.txt file says that certain pages should not be accessed by search engines, well-behaved robots will not crawl them, so those pages generally won’t show up in search results for visitors to find. This is important for keeping the private areas of your website out of search.

Today’s blog post focuses entirely on developing a good robots.txt file:

Use of robots.txt:

First, let’s understand how search engines gather information about your website. Search engines send out small programs called spiders or robots to discover your site and collect details about its pages. The information they bring back is what makes your pages available in search results, where they can be useful to visitors.

Your website’s robots.txt file gives these programs instructions about which pages they may visit, and can therefore appear in search results, and which pages they should stay away from. So, here’s exactly how robots.txt works:

User-agent: *

Disallow: /portfolio

This tells all search engine robots not to visit this page on your website. The rule matches by prefix, so it covers every URL whose path begins with /portfolio.
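To see what this rule means in practice, here is a minimal sketch that feeds the two lines to Python’s standard `urllib.robotparser` module and asks whether a robot may fetch a URL. The `example.com` URLs and the helper name `is_allowed` are assumptions made for illustration; they are not part of any real site.

```python
from urllib.robotparser import RobotFileParser

# The rule from the text: applies to every robot, blocks /portfolio.
ROBOTS_TXT = """\
User-agent: *
Disallow: /portfolio
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if user_agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Hypothetical URLs, used only to exercise the rule.
    for url in ("https://example.com/portfolio", "https://example.com/contact"):
        print(url, is_allowed(ROBOTS_TXT, "Googlebot", url))
```

A polite crawler performs roughly this check before requesting a page, which is why the rule only works for robots that choose to honor it.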

But for this rule to work properly, it must be preceded by this line:

“User-agent: *”, which specifies the robots the rule applies to. The * means the rule applies to every robot.

For example: if you use “User-agent: Googlebot” instead, only Google’s robot will be blocked from accessing this page.
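The difference between the two user-agent lines can be checked with a short sketch, again using Python’s standard `urllib.robotparser`. The helper `blocked_agents`, the `example.com` URL, and the choice of `Bingbot` as the second robot are assumptions for illustration only.

```python
from urllib.robotparser import RobotFileParser

# Same rule as before, but addressed to Googlebot only.
GOOGLEBOT_ONLY = """\
User-agent: Googlebot
Disallow: /portfolio
"""


def blocked_agents(robots_txt: str, agents: list[str], url: str) -> list[str]:
    """Return the robots from agents that may NOT fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # Hypothetical URL; only Googlebot should be reported as blocked.
    print(blocked_agents(GOOGLEBOT_ONLY, ["Googlebot", "Bingbot"],
                         "https://example.com/portfolio"))
```

With this file, robots other than Googlebot find no rule that applies to them, so they remain free to visit the page.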

Some pages must be blocked:

There are several reasons to block pages with the robots.txt file. One is duplicate content: blocking duplicate web pages keeps them from being indexed, which avoids damage to your website’s SEO.

Another is to keep robots away from certain web pages in order to maintain the privacy of the website, so that users don’t reach confidential information through search results.
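As a rough sketch of how such blocking rules could be written down, the snippet below builds the text of a robots.txt file from a list of paths. The function name `build_robots_txt` and the paths `/print/` (standing in for duplicate printer-friendly pages) and `/private/` are hypothetical; substitute the paths that apply to your own site.

```python
def build_robots_txt(disallowed_paths: list[str], user_agent: str = "*") -> str:
    """Build robots.txt text with one Disallow line per path."""
    lines = [f"User-agent: {user_agent}"]
    for path in disallowed_paths:
        lines.append(f"Disallow: {path}")
    # End with a newline so the result can be saved directly as a file.
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical paths: duplicate pages and a confidential area.
    print(build_robots_txt(["/print/", "/private/"]), end="")
```

The output is saved as a file named robots.txt at the root of the website, which is where robots look for it.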

Developing a robots.txt file:

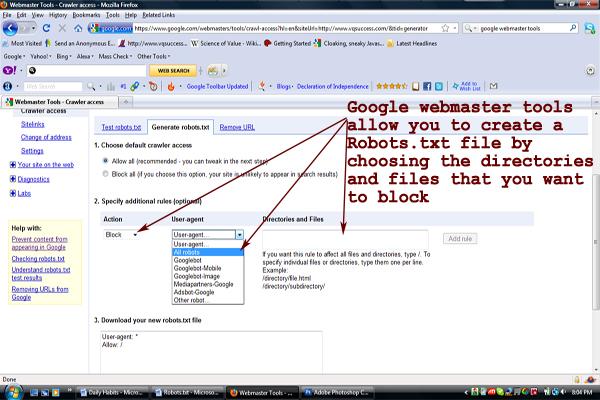

With Google’s free Webmaster Tools, it is very easy to create a robots.txt file using the “Crawler access” option available under “Site configuration” in the menu. There, you can select the “Generate robots.txt” option and create a simple robots.txt file as shown here: